Every system administrator has learning experiences worth sharing to help others. Here are some lessons I learned as the solo system administrator for a small, growing company. I was lucky to have these experiences before I had the chance to build my own server room from scratch.

Whoa! What Did I Get Myself Into?

Quite some time ago, I started a new job as the company’s first and only system administrator. On my first day, an overloaded circuit in the “data room” dropped all of the machines, routers, and switches, and I had the first outage of my new job. When I went to investigate, the first thing I noticed was that the door was wide open, and as soon as I stepped inside I knew why. It had to have been 85 degrees in the room with the door shut, and the room was powered by a single 15 amp circuit that also fed the neighboring office and a printer around the corner. When I pointed out the problem, management was kind enough to help me build my first data room.

Planning My First Server Room

We chose a room on another floor that we hadn’t expanded to yet. We fitted the door with a pretty good lock and figured that the “bat-cave” hidden location would add to the security of the room. A 1 ton cooler had been installed in the room years earlier, and it still worked great. I had a 30 amp circuit installed and had some shielded twisted pair CAT5E run into the room. I found a nice 44U rack that fit the budget and even purchased a nice 5kVA Tripp Lite UPS to keep it all running during a power failure. I set up a nice CentOS / Nagios box to monitor everything. It was awesome, particularly compared with where we had been when I arrived! We moved our equipment in and everything was working great. The room was cool, I had plenty of power, and the routine summer power failures didn’t faze me at all.

Manage Data Center Growth – Heat and Power

Things were going well: uptime had improved, and we grew as a company. We suddenly had more and more equipment. I added a second rack with another 30 amp circuit and another UPS. Everything was fine until about May of the next year, when the outside temperature began to rise. We had added several servers over the winter without any temperature trouble, but as summer came we suddenly understood why a past tenant had installed the room cooler. The room seemed to absorb the sun’s heat, and now that I had more equipment than the year before, I couldn’t keep it cool enough.

I had to get some cooling, and quick! I rented a MovinCool portable cooler from the local equipment rental place. I plugged it in and taped on a big expandable vent tube for the exhaust air. I popped out a ceiling tile and ran the exhaust hose above the tiles, as far away from the room as I could. I also purchased some room monitoring equipment (http://avtech.com/Products/Environment_Monitors/) to alert me if the temperature got too high, if the building power went out, etc.

Even with the second cooler, we were getting warm in the afternoons. I hurried down to the local Home Depot, got some insulation board, and covered up the windows. (A little note here: be careful with the insulation board. My windows had been tinted, and the insulation board reflected the heat back into the window. One of the windows cracked because of the additional heat and had to be replaced. Oops!) I was hoping to get an additional permanent cooler installed, but the company was planning a move to another location and understandably didn’t want to invest in the building. I finally took the MovinCool unit back to the rental center and picked up some Tripp Lite portable coolers (http://www.tripplite.com/en/products/product-series.cfm?txtSeriesID=759) that I could take with us to our new building. This pretty much resolved our cooling problem for good.
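For anyone wanting to avoid being surprised the same way, the cooling arithmetic is simple enough to sketch. Nearly all the electrical power drawn by IT equipment ends up as heat, 1 watt is about 3.412 BTU/hr, and 1 ton of cooling is 12,000 BTU/hr. The function names and the 25% headroom below are illustrative assumptions, not figures from this room, and the sketch ignores sun load, people, and lighting unless you add them as extra watts.

```python
# Rough heat-load arithmetic for a small server room (a sketch, not a
# substitute for a proper HVAC assessment).
BTU_PER_HR_PER_WATT = 3.412   # 1 W of electrical load is about 3.412 BTU/hr of heat
BTU_PER_HR_PER_TON = 12_000   # 1 ton of cooling removes 12,000 BTU/hr


def cooling_tons_needed(it_load_watts: float,
                        other_heat_watts: float = 0.0,
                        headroom: float = 0.25) -> float:
    """Tons of cooling needed for a given load.

    other_heat_watts is a placeholder for sun load, lighting, people, etc.
    headroom is an assumed safety margin (25% by default).
    """
    total_btu_per_hr = (it_load_watts + other_heat_watts) * BTU_PER_HR_PER_WATT
    return total_btu_per_hr * (1.0 + headroom) / BTU_PER_HR_PER_TON


def max_load_watts(cooler_tons: float, headroom: float = 0.25) -> float:
    """Largest heat load (in watts) a cooler can carry with the given headroom."""
    return cooler_tons * BTU_PER_HR_PER_TON / BTU_PER_HR_PER_WATT / (1.0 + headroom)


if __name__ == "__main__":
    # Hypothetical example: how much load can a 1 ton unit carry?
    print(f"1 ton unit, 25% headroom: {max_load_watts(1.0):.0f} W")
    print(f"3000 W of gear needs about {cooling_tons_needed(3000):.2f} tons")
```

Running the numbers each time a server is added makes it obvious when a room is getting close to the limit of its cooler, well before the first hot afternoon.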

It was about this time that I also began to worry about the possibility of longer-term outages. We would have half-hour power failures every month or so during the summer. I ended up purchasing a huge generator that I could roll out into the parking lot, and I made some huge twist-lock extension cords to run in to my UPS units in case a failure lasted longer. I lived 5 minutes away and, with practice, could get from the house to a running generator in about 10-15 minutes. We ended up using the generator a couple of times, even though we never had a failure long enough to need it.
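The question with a manually started generator is whether the UPS batteries will last until it is running. Here is a back-of-the-envelope sketch of that check. The battery capacity, efficiency, and usable-fraction values are assumptions for illustration only; real runtime falls off faster than linearly at high load, so the manufacturer's runtime chart for your UPS model is the number to trust.

```python
def ups_runtime_minutes(battery_wh: float,
                        load_watts: float,
                        inverter_efficiency: float = 0.9,
                        usable_fraction: float = 0.8) -> float:
    """Very rough UPS runtime estimate in minutes.

    battery_wh          -- nominal battery energy in watt-hours (assumed known)
    inverter_efficiency -- assumed fraction of battery energy reaching the load
    usable_fraction     -- assumed fraction of capacity usable before shutdown
    """
    if load_watts <= 0:
        raise ValueError("load_watts must be positive")
    usable_wh = battery_wh * usable_fraction * inverter_efficiency
    return usable_wh / load_watts * 60.0


def covers_generator_start(runtime_minutes: float,
                           generator_start_minutes: float,
                           safety_factor: float = 2.0) -> bool:
    """True if the UPS outlasts the generator start time with a safety margin."""
    return runtime_minutes >= generator_start_minutes * safety_factor


if __name__ == "__main__":
    # Hypothetical numbers: 1500 Wh of battery carrying a 2000 W load,
    # with a generator that takes 15 minutes to get running.
    runtime = ups_runtime_minutes(battery_wh=1500, load_watts=2000)
    print(f"Estimated runtime: {runtime:.1f} min")
    print("Enough to start the generator:", covers_generator_start(runtime, 15))
```

The safety factor is there because batteries age and a 10-15 minute drive can easily turn into 25 minutes on a bad night.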

Data Centers Need Redundant Internet

We had continued to grow, and I had to purchase additional internet lines from additional providers, both to cover the internet needs of the employees and to provide redundancy for the data room. This turned into a huge bonus later on when I got paged in the middle of the night because one of my ISPs was down. I called the ISP and they agreed that the line was down, but I was the only customer affected, so they told me to check my equipment. I showed up at the office, confirmed that my equipment was fine, and called them back. The tech came to investigate and found the problem. The local Taco Time restaurant near the street had been robbed, and the robber, in an attempt to stop any alarms from contacting the authorities, had taken his buck knife to the twisted pair pedestal outside the store. Copper was everywhere, and it would take the poor tech all day to put Humpty Dumpty back together again. Luckily, I had gotten a second line and we had stayed up.

Considerations When Planning a Server Room

All of the above gave me some great experience for when we finally moved and I needed to build out our new data room. Here are the things I took into consideration as I built it:

- Anti-Static Flooring – Hey, if you are building out a room, you might as well get anti-static tiles and glue adhesive to eliminate static in the room.

- Humidity – Many coolers remove humidity from the air. Adding a humidifier helps control static electricity in your server room.

- Cooling – You can never get enough! Heat kills machines, causes hard drive failures, etc. If you get just barely enough cooling, the units will be working at their limit, which could cause more failures and leaves you no room to grow. If you can afford it, get two separate units so that you can perform maintenance on one while the other keeps running. As you plan your cooling system, remember that servers take in air at the front of the machine and expel hot air out the back, creating a cold aisle and a hot aisle. Plan your air ducts so that the cold air drops down right in front of your machines and the return collects the hot air from the back. Make sure that all racks have cold air in front of them. Some server rooms block airflow between the cold and hot aisles so that the cold air has to pass through the machines. This is the most efficient method. Be sure to keep a couple of portable coolers around too, just in case.

- Choose an Interior Room Location – My experience with the sun heating my server room made me realize that the location of the room makes a huge difference. An interior room could give you more security too. You may want to place it near your elevators or loading dock so that you can get large and bulky equipment in more easily.

- Big Doors – Server racks can be tall. Make sure that your doors are tall and wide enough for the largest equipment, and then some.

- Environmental Monitor – If you are planning on putting thousands of dollars of equipment into a room, you should spend a little money on monitoring the room’s environment. You can get units that monitor temperature at multiple locations, humidity, power, etc. Get a good unit that will alert you when thresholds are crossed.

- Adequate Redundant Power with Backup Generator – This is obvious. Your server room could grow, and you need to be sure that you have plenty of power for it at all times. If your building doesn’t have a backup generator, can you add one for your server room? If your building does have one, does it fail over instantly, or does it take some time for fail-over to occur? How much battery power do you need to bridge the fail-over time?

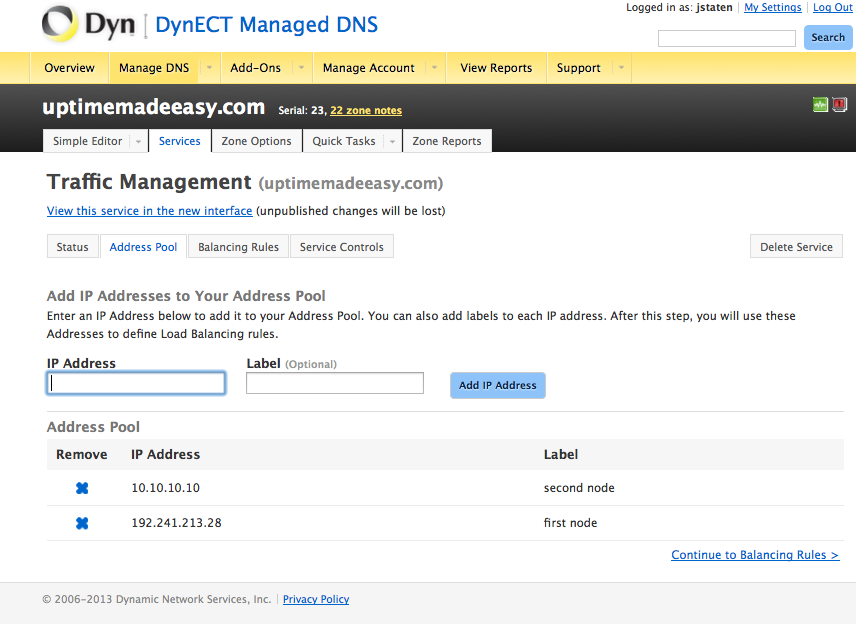

- Redundant Internet – Every ISP will have a failure at some point. Whether it is degraded performance or a plain outage, you will want multiple ISPs to give your systems multiple paths to the internet. If you don’t have a spectacular network administrator to offer you a BGP routing solution, think about deploying a link balancer (see the Uptime Made Easy article “Choose a Link Balancer” for more information).

- Internal and External Monitoring Systems – You will want a strategy for alerting you to failures, temperature issues, down systems, full file systems, etc. You will also want some external monitoring to tell you whether your websites and other external-facing systems are reachable from outside.

- Controlled Room Access – You will want to control who has access to your server room. With a card-key access system you can see who accessed the room and when, and keep out those who shouldn’t be in there. That includes anyone who wants to use your server room for storing old filing cabinets, company Christmas tree decorations, etc. This is your server room. Make sure that you still have a way in if the card-key access system is not working!

- Ladder Racks are great for managing tons and tons of cables – If you don’t manage your cables from the beginning, you will have a mess. Raised floors hide the cables, which is nice. Ladder racks show the cables, but they help you run them in an organized manner and can look pretty cool. Color code your cables, organize them, use the right length for each machine so that none is too short or too long, etc.

- Use Vertical Metered PDUs in your Racks – Vertical PDUs give you outlets right by your servers in the rack, so you don’t have to run power cords from each machine to a distant power source. Metered PDUs tell you how many amps are being drawn on each PDU, which keeps you from plugging just one more machine into an overloaded circuit. This is huge. Believe me on the metered PDUs; they will save you from a problem sometime. Try not to load a circuit over half of its capacity, and plug machines with redundant power supplies into 2 different circuits. That way, if one circuit fails, your second circuit has plenty of amps left for the fail-over.

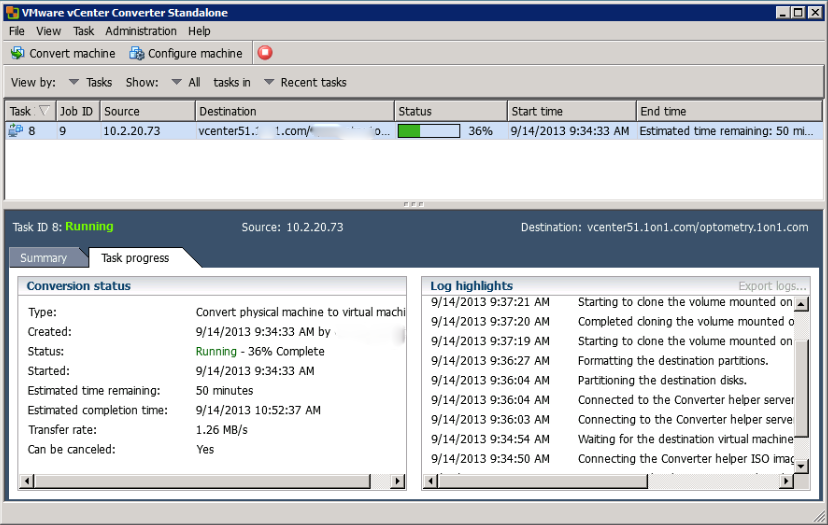

- A Second Data Center Location – No matter how many plans and preparations you make, having another location that you can fail your systems over to will someday save you. I remember a full colo that I was using losing power for over 12 hours. Luckily, I had a second environment where I was able to send all of my traffic.

- Documentation – You’ve gone out and made a good plan for your server room. You’ve got your power, cooling, redundant ISPs, etc. Now you need to write it all down. Make rules for yourself about wiring, how to fail over, etc.
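The half-capacity rule from the metered PDU item above is easy to turn into a quick check. This is only a sketch: the `Circuit` class, the function names, and the amp readings are made up for illustration, and the fail-over check takes the worst case where every machine on the failed circuit is dual-corded to the survivor.

```python
from dataclasses import dataclass


@dataclass
class Circuit:
    name: str
    breaker_amps: float    # rated capacity of the circuit
    measured_amps: float   # current reading from the metered PDU


def can_add(circuit: Circuit, extra_amps: float, limit: float = 0.5) -> bool:
    """True if adding extra_amps keeps the circuit at or under the load limit."""
    return (circuit.measured_amps + extra_amps) / circuit.breaker_amps <= limit


def failover_ok(survivor: Circuit, failed: Circuit) -> bool:
    """True if the surviving circuit can carry its own load plus the failed one's.

    Worst case: every machine on the failed circuit has a second power
    supply plugged into the survivor.
    """
    return survivor.measured_amps + failed.measured_amps <= survivor.breaker_amps


if __name__ == "__main__":
    # Hypothetical readings from two 30 amp circuits feeding one rack.
    a = Circuit("A", breaker_amps=30, measured_amps=12)
    b = Circuit("B", breaker_amps=30, measured_amps=14)
    print("Room for a 3 amp server on A:", can_add(a, 3))
    print("B survives losing A:", failover_ok(b, a))
    print("A survives losing B:", failover_ok(a, b))
```

If both circuits stay at or under half load, the fail-over check passes automatically, which is the whole point of the rule.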

Why Didn’t I Just Colocate Everything in the First Place?

Now that’s a good question. Putting our server growth in a colocation facility would have spared us many of these headaches. The real reason is that we didn’t think we would grow so fast. We went from 2 servers up to 15 bit by bit, and it just grew on us. Each time we added equipment, we figured just one more server wouldn’t be a problem and we could save the colo costs and the private lines to the data center. And, well, we still had on our minds the colo power failure that I wrote about above. Overall, we saved a ton of money, but paid for it with the headaches of managing everything. Our new building was built like a colocation facility, with 4 backup generators, and we had all types of fiber internet options available to us for cheap. We saved a ton of money by leveraging our building’s resources. Your situation will be different one way or another and you will need to make your own decision, but if you do end up building your own server room, I hope that my experiences will help you out.
